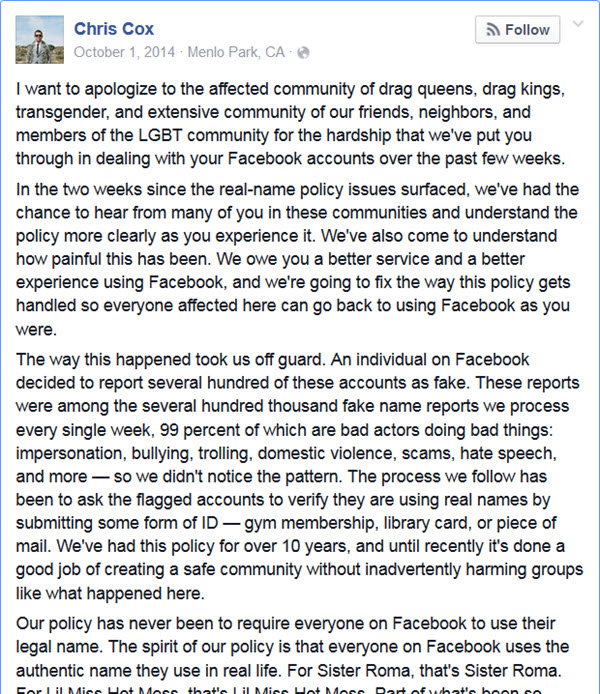

It was nice of Chris Cox to post an explanation of Facebook’s name policy and apologize to “the affected community of drag queens, drag kings, transgender, and extensive community of our friends, neighbors, and members of the LGBT community for the hardship that we’ve put you through in dealing with your Facebook accounts over the past few weeks.”


Except that the post doesn’t honestly explain Facebook’s name policy. The real purpose of the policy is to force you to use a name on Facebook that can be matched to the name you use to make transactions – such as the one on your credit card – so they can correlate the ads you’ve been shown with purchases you make in the real world and charge the advertiser more money. This is why the old wording of the policy asked for the same documents they match against – driver’s license, credit card, etc.

Facebook’s name policy results in two classes of user. The ones who can be matched to their offline activity are in a High-Revenue tier. Those whose real names don’t match the ID they use for real world transactions are in a Low-Revenue tier. (These are not Facebook’s terms, simply descriptive of the relative difference in revenue.)

Facebook trolls delight in reporting people they dislike for alleged violations, which can result in the removal of individual posts or entire accounts, and members of the LGBT community are favorite targets. Although this was always a big problem for the victims, it wasn’t a big problem for Facebook until the trolls organized a mass reporting spree. That gave the victims a cluster of incidents that was impossible to ignore, and the story was finally picked up by the news media and popular press.

Last year, in response to negative press about the impact of the Real Name policy on the LGBT community, Facebook reluctantly changed the policy wording to ask for your “authentic name.” Ironically, I still cannot use my authentic name with the correct spelling, and Facebook has declined to respond to my complaint, presumably because doing so won’t put me into the High-Revenue tier. The account name Facebook forces me to use does not match the name on any of the articles, presentations, blog posts, or the book I’ve published over the 20+ years I’ve been writing about IBM MQ, and that name is the one I’ve gone by since I was a teenager.

A Facebook page openly coordinating a malicious reporting campaign was not deemed to violate Facebook’s Terms of Service

But the worst part of all of this, and the thing that makes Facebook’s true intent obvious, is that Facebook changed the policy but didn’t fix the reporting and enforcement side. I experienced this personally when Facebook’s initial response to my request to remove the “FB Time-Outs for Provaxers” page was that it didn’t violate their Terms of Service. This is incredible considering that the page was set up to coordinate a mass reporting spree targeting pro-vaccination activists and declared this openly in its title! Reporting sprees are a well-known tactic used by activists and their opponents alike, and they clearly violate Facebook’s Terms of Service, yet the page I tried to report wasn’t removed until a counter-reporting spree was organized. Facebook’s tolerance of this kind of abuse is particularly grievous when the activists in question are a hate group, as in the recent case reported by the EFF, where the reporting spree was directed against Native Americans and committed on Indigenous People’s Day.

Why focus on putting in exceptions for affected groups and not on fixing the abuse of the reporting system? Stack Overflow uses a reputation economy to address abuse and it works very well. Twitter verifies accounts. Web of trust verifies through, well, a web of trust. Facebook could fix the abuse but that would require it to value the welfare of its users more than it values the revenue generated through ignoring the harm inflicted on them.

Incidentally, Facebook does have verified profiles, but only for “Celebrities and public figures,” “Global brands and businesses,” and “Media.” Clearly, Facebook already recognizes that some groups are subject to above-average trolling and abuse and treats them differently. If your group is glamorous, lucrative, and mainstream, you get the additional protection of a verified account. If your group is socially marginalized, Facebook will reluctantly make an exception to harm you less, once you prove that you matter by getting the news media or popular press to take up your cause.

Aside: It is unclear under which category Chris Cox, Facebook’s Chief Product Officer, qualifies for a verified account, considering that most of my local news anchors and more than half of the bestselling authors I looked up haven’t been granted that distinction. What is clear is that although all people are created equal, at Facebook some are more equal than others.

If the welfare of the community were Facebook’s top priority, as they claim, the focus and dialog would be about stopping malicious reporting. Instead, what we see is Facebook turning its attention, one at a time, to each Low-Revenue tier group that can rally and get some publicity, while ignoring the harm done to any group lacking the influence to get press coverage. First it was drag queens and the larger LGBT community. Today it is Native Americans, and they might not have shown up on Facebook’s radar if not for the stunt in which they were targeted on Indigenous People’s Day. How long will Facebook keep playing Whack-A-Mole every time a new group pops up to claim harm, instead of just fixing the name policy and the abuse of the reporting system for everyone?

Facebook, and Chris Cox in particular, this is an open challenge:

If you are going to keep screwing us, at least admit that it is about the money and has nothing to do with our safety and welfare. Between the High- and Low-Revenue tiers there’s a middle tier: if you simply asked people to voluntarily provide matching data, many would. It isn’t as lucrative as earning revenue off the backs of victims of malicious reporting, but at least it would be honest.

Or else prove that the welfare of your users is your top priority by fixing the abuse of the reporting system. For starters, how about not allowing accounts less than [x] months old to report, and permanently banning accounts that make malicious reports? Then circle back and create a true reputation-based system with ways to build trust and earn privileges, with different levels of violation reporting unlocked along that reputation scale.

But whatever you choose, please fix the broken names policy and stop telling us that policies which inflict harm on our community are enacted and enforced for our safety. Clearly you tell us this in hopes of preserving our trust and your reputation. It’s having the opposite effect.

Trust. Reputation. You’re doing it wrong.

T.Rob (Not T-Rob)